How accurate is API-based AI visibility tracking compared to ChatGPT UI?

As AI search gains importance, a new category of tools has emerged to track “AI visibility” or AEO performance. In parallel, many companies are attempting to build their own internal tracking workflows using APIs.

Most of them follow a similar approach:

- Take a set of prompts

- Run them through an LLM API

- Extract which brands show up

- Track visibility over time
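The four steps above can be sketched in a few lines. This is a minimal illustration, not any particular tool's implementation: `ask_model` is a hypothetical callable standing in for whatever produces the answer (an API call, a scraped UI response), and matching against a fixed `known_brands` list is an assumption made to keep the example short.

```python
import re
from collections import Counter
from typing import Callable, Sequence


def extract_brands(answer: str, known_brands: Sequence[str]) -> set[str]:
    """Return the known brands that appear in an answer (case-insensitive, whole word)."""
    found = set()
    for brand in known_brands:
        if re.search(rf"\b{re.escape(brand)}\b", answer, flags=re.IGNORECASE):
            found.add(brand)
    return found


def visibility_rates(
    prompts: Sequence[str],
    ask_model: Callable[[str], str],  # hypothetical: any function that returns an answer
    known_brands: Sequence[str],
) -> dict[str, float]:
    """Share of prompts whose answer mentions each brand."""
    counts = Counter()
    for prompt in prompts:
        counts.update(extract_brands(ask_model(prompt), known_brands))
    return {brand: counts[brand] / len(prompts) for brand in known_brands}
```

Everything downstream depends on what `ask_model` actually returns, which is the question the rest of this article examines.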

On the surface, this feels logical. If the model is generating answers, why not measure those answers directly?

But there’s a fundamental disconnect between your objective and your tracking setup:

Do API responses actually represent what users see in tools like ChatGPT?

Because if they don’t, then everything built on top of that data (competitive analysis, visibility rate, optimization strategy) is based on a distorted view of reality.

To test this, I ran a controlled analysis comparing API outputs with actual ChatGPT UI responses.

TL;DR

- The OpenAI API (without web search) surfaced an 84% smaller brand universe than the ChatGPT UI across 300 responses (135 unique brands vs 845), on the same prompts and the same model family.

- API-based tracking creates two dangerous distortions: ghost competitors (real threats that don't appear in your data) and zombie competitors (brands your data over-indexes that barely register in reality).

- Adding web search to the API helps significantly, but still doesn't fully replicate what users experience.

- If you track AEO through raw API calls, you cannot see the results of your own optimization work until the model retrains, so the feedback loop stretches to months.

The Core Problem

Most companies tracking AI search visibility are making a foundational error. A structural one that corrupts data at the root.

The assumption is this: if I call the ChatGPT API with my target prompts and count brand mentions, I'm measuring what users see.

That assumption is wrong.

The API and the ChatGPT UI are not the same product, and the gap between them is structural. If your AEO strategy relies on API-only tracking, you’re not actually measuring AI visibility. You’re building dashboards on top of a distorted version of reality, and the outputs won’t reflect what real users see.

What We Tested and How

To understand exactly how wrong the numbers get, I ran a controlled analysis across 5 brands and 35 prompts, mostly bottom-of-funnel queries, for 10 consecutive days. Every prompt was run across three conditions simultaneously.

- ChatGPT UI

- GPT 5.4 API

- GPT 5.3 Chat API with web search tool

The 5 brands:

- Zoomcar: Self-drive car rentals (India)

- Hatica: Engineering analytics

- Trumpet Media: Marketing agency

- CleverTap: Mobile marketing

- Delhivery: Logistics

The 3 conditions:

- ChatGPT UI: Prompts run through ChatGPT's native interface with web search on. This is what a real buyer experiences.

- GPT 5.4 API, no web search: Prompts run via the OpenAI API with no system prompt and web search not explicitly enabled. This is how most AEO tools and internal tracking setups work.

- GPT 5.3 Chat API, web search enabled: Prompts run via the API with web search explicitly turned on.

For each response, I extracted every brand mentioned and measured total mentions, unique brands, average brands per answer, Shannon entropy and mention rate per brand.

💡 Shannon entropy: H = -Σ p(x) log p(x), where p(x) is brand x's share of all brand mentions. It measures how concentrated brand mentions are. Higher entropy means mentions are spread across many brands. Lower entropy means the same names keep repeating.
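As a rough sketch of how these metrics can be computed from extracted brand lists: the function names are hypothetical, each brand is counted once per response (an assumption), and the log base is assumed to be 2. The study's exact base and normalization are not stated, so absolute entropy values from this sketch need not match the reported ones.

```python
import math
from collections import Counter


def summarize(responses: list[list[str]]) -> dict[str, float]:
    """responses: one list of extracted brand names per answer (non-empty list)."""
    # Count each brand at most once per response.
    mentions = Counter(brand for r in responses for brand in set(r))
    total = sum(mentions.values())
    # p(x): brand x's share of all mentions.
    probs = [count / total for count in mentions.values()]
    entropy = -sum(p * math.log2(p) for p in probs)
    return {
        "total_mentions": total,
        "unique_brands": len(mentions),
        "avg_brands_per_answer": total / len(responses),
        "entropy_bits": entropy,
    }


def mention_rate(responses: list[list[str]], brand: str) -> float:
    """Fraction of responses that mention the brand at least once."""
    return sum(brand in r for r in responses) / len(responses)
```

For example, `summarize([["Zoomcar", "Myles"], ["Zoomcar"], ["Zoomcar", "Drivezy"]])` reports 5 total mentions across 3 unique brands, and `mention_rate` for Zoomcar on the same input is 1.0.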

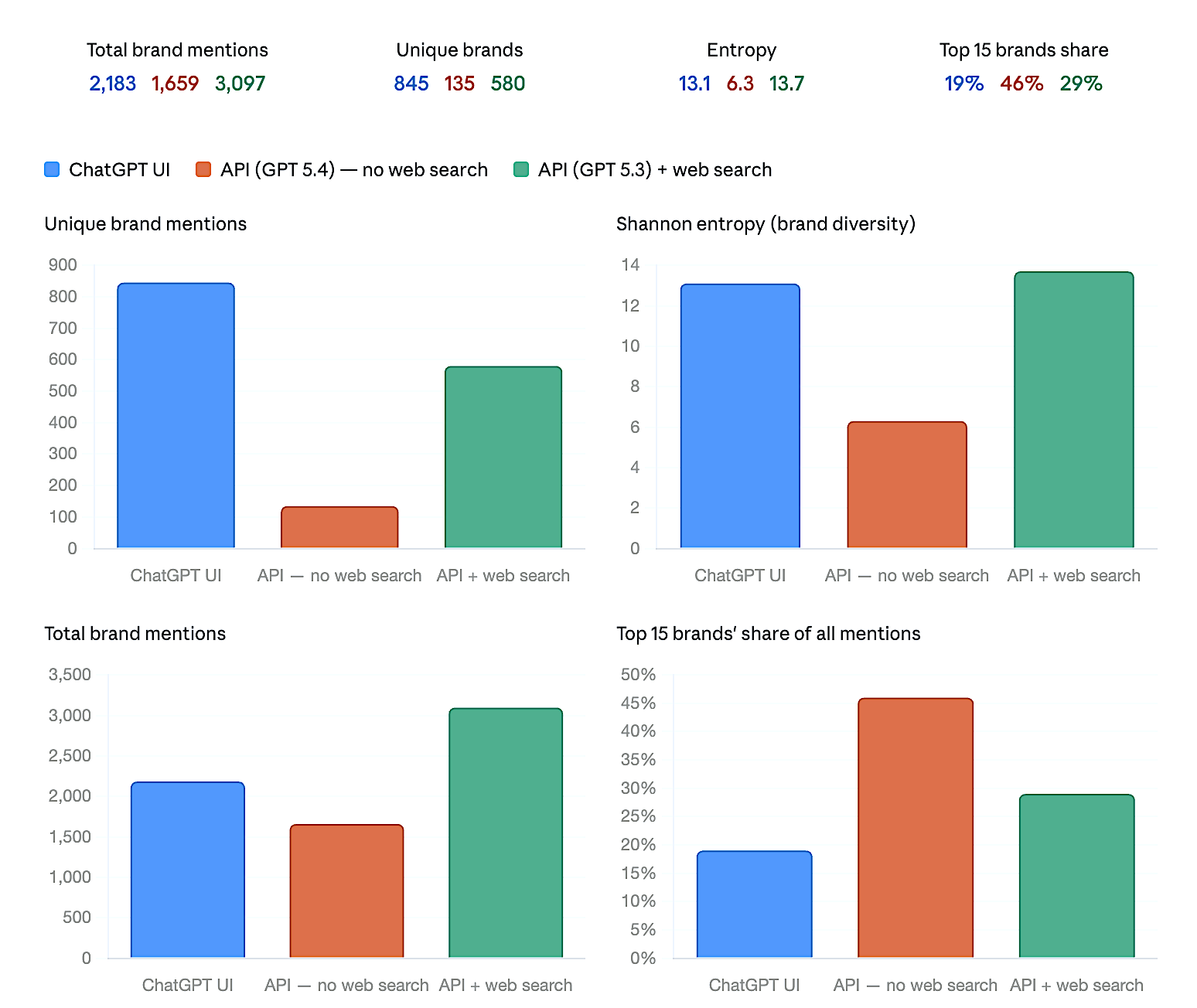

Finding 1: The API operates in an 84% smaller brand universe

| Metric | ChatGPT UI | API, No Web Search | API + Web Search |
|---|---|---|---|
| Total brand mentions | 2,183 | 1,659 | 3,097 |
| Unique brands | 845 | 135 | 580 |
| Avg brands per answer | 7 | 6 | 10 |
| Shannon entropy | 13.1 | 6.3 | 13.7 |
| Top 15 brands' share | 19% | 46% | 29% |
| Top brand's share | 2% | 6% | 4% |

The number that stopped me: the API without web search surfaced 135 unique brands. The ChatGPT UI surfaced 845.

Same prompts. Same model family. Completely different competitive landscapes.

Does the ChatGPT API reflect what users actually see? No. The API without web search surfaces 84% fewer unique brands than the ChatGPT UI on identical prompts.
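For readers who want to check the headline figure, the arithmetic from the two unique-brand counts looks like this (variable names are illustrative):

```python
ui_unique = 845   # unique brands in the ChatGPT UI
api_unique = 135  # unique brands in the API without web search

reduction = (ui_unique - api_unique) / ui_unique  # relative shrinkage of the brand universe
ratio = ui_unique / api_unique                    # how many times larger the UI's universe is

print(f"{reduction:.0%} smaller; the UI surfaced {ratio:.1f}x as many unique brands")
# prints: 84% smaller; the UI surfaced 6.3x as many unique brands
```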

Finding 2: The API artificially concentrates brand visibility

Shannon entropy tells the full story:

- API without web search: 6.3

- ChatGPT UI: 13.1

- API with web search: 13.7

The API operates from the model’s internal knowledge without fresh grounding, so it tends to converge on the same high-probability set of brands.

The top 15 brands account for 46% of all mentions in API responses. In the UI, they account for just 19%. The single most-mentioned brand has a 33% mention rate in API responses and only 17% in the UI.

This has a direct impact on how visibility gets measured.

If your brand happens to be part of that high-probability set, it will show up repeatedly across API responses, inflating your perceived share of voice. If it isn’t, it will rarely get selected, so your visibility appears artificially low, even if you’re present in real user-facing answers.
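The concentration figures above (the top 15 brands' share of mentions) are straightforward to compute from mention counts. A minimal sketch, with `top_k_share` as a hypothetical helper name:

```python
from collections import Counter


def top_k_share(mentions: Counter, k: int) -> float:
    """Fraction of all mentions captured by the k most-mentioned brands."""
    total = sum(mentions.values())
    top = sum(count for _, count in mentions.most_common(k))
    return top / total
```

With made-up counts such as `Counter({"A": 5, "B": 3, "C": 1, "D": 1})`, `top_k_share(counts, 2)` is 0.8: two brands absorb 80% of mentions. The closer this number is to 1 for a small `k`, the more a tracker's share-of-voice figures are driven by the same handful of names.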

Finding 3: Ghost competitors - threats your data erases

Braze, a mobile marketing company, competes directly with CleverTap, one of the brands I tracked. In the UI, ChatGPT retrieves current web content: recent comparison articles, analyst coverage, G2 reviews, and product roundups. Braze has been gaining significant enterprise market share, and that shows up clearly in current web content.

The API knows none of this. Its training data has a cutoff. If a competitor's prominence grew substantially in the past 12–18 months, the API is simply unaware it happened.

Is it true that API-based AEO tracking can miss real competitors entirely? Yes. Competitors whose market position has grown significantly since the model's training cutoff may show zero mentions in API data while appearing frequently in what buyers actually see.

If you're CleverTap's marketing team tracking competitive intelligence through API calls, Braze does not exist in your data. It exists in your buyers' answers.

Finding 4: Zombie competitors - threats your data invents

The inverse problem. Myles, a self-drive car rental brand in India, had a 29% share of voice in API responses and only 6% in UI responses.

The model's training data reflects an older competitive reality when Myles was a prominent player. Recent web content tells a materially different story about the brand's current market position. The UI pulls that current picture. The API does not.

The correction is visible as you add retrieval. Myles normalizes progressively:

- API, no web search: 29%

- API + web search: 18%

- ChatGPT UI: 6%

Each step toward real-time retrieval brings the data closer to reality.

How does API-based tracking distort competitive data? It over-represents brands that were prominent when the model was trained and under-represents brands that have risen since, sometimes by roughly 5x, as with Myles (29% vs 6%).
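One way to surface the ghost and zombie competitors from Findings 3 and 4 is to compare each brand's share of voice across the two conditions. The sketch below is illustrative only: the `ratio` and `floor` thresholds are arbitrary assumptions, not values from the study, and apart from the Myles figures the example numbers are made up.

```python
def classify(
    api_sov: dict[str, float],
    ui_sov: dict[str, float],
    ratio: float = 3.0,   # hypothetical: how lopsided the shares must be
    floor: float = 0.02,  # hypothetical: ignore brands that are tiny in both
) -> dict[str, str]:
    """Label brands whose share of voice differs sharply between API and UI."""
    labels = {}
    for brand in set(api_sov) | set(ui_sov):
        api = api_sov.get(brand, 0.0)
        ui = ui_sov.get(brand, 0.0)
        if ui >= floor and ui >= ratio * api:
            labels[brand] = "ghost"   # visible to buyers, missing from API data
        elif api >= floor and api >= ratio * ui:
            labels[brand] = "zombie"  # inflated in API data, minor in the UI
    return labels


api = {"Myles": 0.29, "SteadyBrand": 0.20}                      # Myles figure from this study
ui = {"Myles": 0.06, "SteadyBrand": 0.18, "NewEntrant": 0.08}   # other values are made up
labels = classify(api, ui)
# labels == {"Myles": "zombie", "NewEntrant": "ghost"}
```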

Finding 5: Web search on the API helps - but doesn't close the gap

Next, I ran the prompts through the GPT 5.3 Chat API with web search enabled, and results improved significantly. Entropy jumped to 13.7, unique brands went from 135 to 580, and total mentions climbed from 1,659 to 3,097.

But the competitive landscape still diverged from the UI in important ways.

Drivezy appeared at 20% share of voice in the API with web search but only 5% in the UI. Shiprocket and WebEngage showed prominently in API + web search responses but didn't make the top brands in the UI.

Even though both the API and ChatGPT UI can use web search, they don’t use it the same way. In the API, web search is an optional tool the model may call inconsistently. In the UI, retrieval is more tightly integrated into the system, with additional layers that influence which sources are used, how they are ranked and how the final answer is constructed.

Does enabling web search on the OpenAI API make it equivalent to the ChatGPT UI? No. It significantly improves accuracy, but the retrieval implementation differs. API-based web search and native ChatGPT web search are not equivalent measurement environments.
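A simple way to check whether two measurement environments agree is to compare their top-N brand sets. The sketch below uses Jaccard overlap, which is a technique swapped in here for illustration and not the comparison used in this study; `top_n_overlap` is a hypothetical helper, and the default of 15 only mirrors the top-15 cut used earlier.

```python
from collections import Counter


def top_n_overlap(a: Counter, b: Counter, n: int = 15) -> float:
    """Jaccard overlap between the top-n brands of two conditions (1.0 = identical sets)."""
    top_a = {brand for brand, _ in a.most_common(n)}
    top_b = {brand for brand, _ in b.most_common(n)}
    union = top_a | top_b
    return len(top_a & top_b) / len(union) if union else 1.0
```

An overlap near 1.0 means both environments rank the same brands at the top. A low overlap means a dashboard built on one environment describes a different set of competitors than the other.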

The Broken Feedback Loop

There's a second problem that matters as much as measurement accuracy.

AEO is not passive observation. You take actions: publish new content, earn citations, build topical authority, place coverage in the right publications. The entire point is that those actions should move your visibility scores.

If you're tracking through the API, you will not see the results of your own work until the model retrains.

New content doesn't exist in the API's world until the model retrains. Retraining cycles are months apart and completely opaque: you don't know when they happen or whether your content was captured. You could run a well-executed AEO campaign and see zero movement in your tracking data.

The UI reflects the web as it exists today. Your work shows up. Your competitors' moves show up. The feedback loop is intact.

What This Means for Your Setup

If you're evaluating a commercial AEO tool:

Start with a simple question: where do the answers to your prompts actually come from?

If you're an in-house team tracking your own brand:

Raw API calls are not a proxy for user experience. They are a different product. If you want to do AEO, you need to integrate UI scraping into your tracking setup. Otherwise your analytics are distorted, and you may end up solving a problem that does not exist.

Frequently asked questions

What is the difference between the ChatGPT API and the ChatGPT UI for AEO tracking?

The ChatGPT UI uses live web search by default to retrieve current web content before generating a response. The API, without web search explicitly enabled, relies solely on the model's training data, a frozen snapshot of the web from months ago. This difference means they surface substantially different brand landscapes, with the UI showing about 6x as many unique brands in this study (845 vs 135).

Does API-based AEO tracking accurately measure brand visibility?

No. API-based tracking without web search reflects the model's training data, not the current web. Because most AEO actions (new content, earned media, third-party citations) only show up on the live web, API-based tracking cannot detect the results of your optimization work until the model retrains, which happens on an opaque cycle months apart.

Why do some competitors appear in API responses but not in real ChatGPT answers?

These are what we call "zombie competitors": brands that were prominent in web content at the time the model was trained but have since declined in relevance. The API surfaces them because it only knows the past. The UI surfaces them less because current web content tells a different story.

Why do some competitors appear in ChatGPT UI results but show zero mentions in API responses?

These are "ghost competitors": brands that have gained market prominence after the model's training cutoff. The live web reflects their current position. The API doesn't know they've grown. This creates a blind spot where real competitive threats are invisible in API-based tracking.

Is Shannon entropy a useful metric for evaluating AEO tracking accuracy?

Yes. Shannon entropy measures how concentrated or diverse brand mention distributions are. A lower entropy score means the same brands are mentioned repeatedly; a higher score means mentions are spread across a broader set. In this study, the API without web search scored 6.3 versus 13.1 for the ChatGPT UI, indicating the API recycles a much narrower set of brands regardless of what's actually happening in the market.

Does adding web search to the OpenAI API fix the tracking problem?

Partially. Enabling web search on the API significantly improves the breadth of brand coverage (unique brands jump from 135 to 580 in this study), and entropy aligns much more closely with UI behavior. However, the specific implementation of web retrieval in an API call differs from how ChatGPT natively executes search in its interface, and competitive distributions still diverge in important ways.

How many unique brands does the ChatGPT UI surface compared to the API?

In this study across 35 prompts and 300 responses per condition, the ChatGPT UI surfaced 845 unique brands. The OpenAI API without web search surfaced 135. That is an 84% smaller brand universe.
